
4 J. R. Busemeyer and Z. J. Wang

$$S_y = \frac{P_{WB}(y)\, S_R}{\sqrt{p(WB = y)}} = \begin{bmatrix} 0 \\ \sqrt{.6} \end{bmatrix} \Big/ \sqrt{.6} = \begin{bmatrix} 0 \\ 1 \end{bmatrix}$$

with respect to the WB basis. Now, after answering “yes,” if asked again, the person is certain to say “yes” to the WB question.
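The collapse step can be checked numerically. The following is a minimal sketch in plain Python, not the authors' code. It assumes an initial state S_R = (√.4, √.6) in the WB basis: only p(WB = y) = .6 is fixed by the text, so the "no" amplitude and the positive signs are illustrative choices, and the helper names `apply` and `norm_sq` are hypothetical.

```python
import math

# Assumption: only p(WB = y) = .6 is fixed by the text; the "no" amplitude
# sqrt(.4) and the positive signs are illustrative choices.
S_R = [math.sqrt(0.4), math.sqrt(0.6)]   # coordinates (no, yes) in the WB basis

# Projector onto the "yes" axis of the WB basis.
P_WB_y = [[0.0, 0.0],
          [0.0, 1.0]]

def apply(M, v):
    """Multiply a 2x2 matrix by a length-2 vector."""
    return [sum(M[i][j] * v[j] for j in range(2)) for i in range(2)]

def norm_sq(v):
    """Squared Euclidean length of a real vector."""
    return sum(x * x for x in v)

projected = apply(P_WB_y, S_R)                    # unnormalized projection of S_R
p_yes = norm_sq(projected)                        # p(WB = y)
S_y = [x / math.sqrt(p_yes) for x in projected]   # collapsed, renormalized state
p_yes_again = norm_sq(apply(P_WB_y, S_y))         # "yes" probability if asked again

print(p_yes, S_y, p_yes_again)
```

Up to floating-point error, the sketch gives p(WB = y) = .6, a collapsed state on the "yes" axis, and probability 1 of repeating the "yes" answer.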

What about the BW question? To represent these answers, we need to rotate from the original basis {Vn, Vy} used to represent the WB question to a new basis {Un, Uy} that provides this BW perspective. Suppose that the basis vectors used to represent the BW answers are obtained from the WB basis vectors by the unitary matrix

$$U_{BW} = \begin{bmatrix} .8090 & -.5878 \\ .5878 & .8090 \end{bmatrix}.$$

The first column of U, i.e., Un = |

, represents |

.5878 |

|

the basis vector for “no;” the second column, i.e.,

−.5878

Uy = .8090 , represents the basis vector for “yes” for

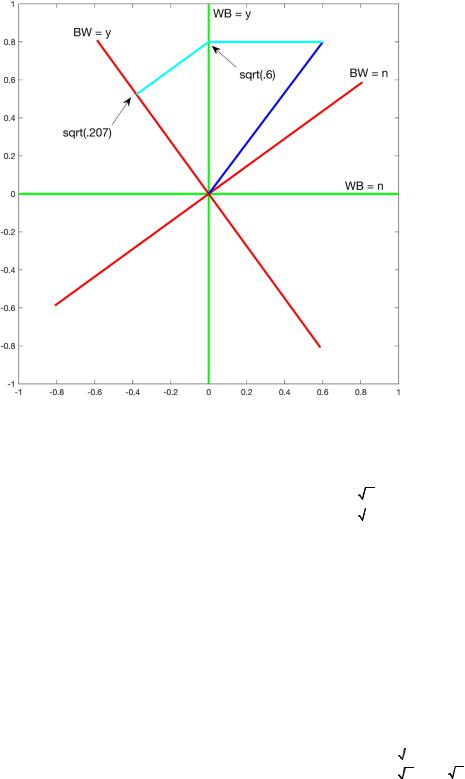

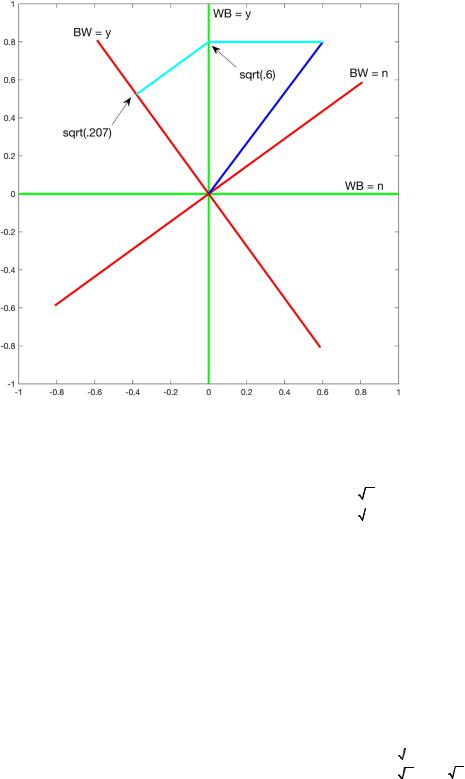

the BW question (see red lines in Figure 1).

Then the projectors for the answers to the BW question equal

$$P_{BW}(y) = U_{BW}\, P_{WB}(y)\, U_{BW}^{\dagger} = \begin{bmatrix} .8090 & -.5878 \\ .5878 & .8090 \end{bmatrix} \begin{bmatrix} 0 & 0 \\ 0 & 1 \end{bmatrix} \begin{bmatrix} .8090 & .5878 \\ -.5878 & .8090 \end{bmatrix} = \begin{bmatrix} .3455 & -.4755 \\ -.4755 & .6545 \end{bmatrix},$$

$$P_{BW}(n) = U_{BW}\, P_{WB}(n)\, U_{BW}^{\dagger} = \begin{bmatrix} .6545 & .4755 \\ .4755 & .3455 \end{bmatrix}.$$
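The change of basis for the projectors can be reproduced with a few lines of plain Python. This is a sketch under one stated assumption: the entries .8090 and .5878 agree with cos 36° and sin 36° to four decimals, so the code treats the unitary matrix as a rotation by 36°. The text itself does not state the angle, and the helper names `matmul` and `transpose` are hypothetical.

```python
import math

# Assumption: the unitary matrix is a rotation by 36 degrees, since
# cos(36 deg) ~ .8090 and sin(36 deg) ~ .5878 match its entries.
theta = math.radians(36)
c, s = math.cos(theta), math.sin(theta)
U = [[c, -s],
     [s,  c]]

def matmul(A, B):
    """Product of two 2x2 matrices."""
    return [[sum(A[i][k] * B[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def transpose(A):
    """Transpose, which equals the conjugate transpose for a real matrix."""
    return [[A[j][i] for j in range(2)] for i in range(2)]

# WB projectors: "no" is the first coordinate axis, "yes" the second.
P_WB_n = [[1.0, 0.0], [0.0, 0.0]]
P_WB_y = [[0.0, 0.0], [0.0, 1.0]]

# Rotate each WB projector into the BW perspective: U P U^dagger.
P_BW_y = matmul(matmul(U, P_WB_y), transpose(U))
P_BW_n = matmul(matmul(U, P_WB_n), transpose(U))

for name, P in (("P_BW(y)", P_BW_y), ("P_BW(n)", P_BW_n)):
    print(name, [[round(x, 4) for x in row] for row in P])
```

The two rotated projectors sum to the identity matrix, which is what guarantees that the “yes” and “no” probabilities for the BW question add to 1.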

If the person first said “yes” to the WB question, then the conditional probabilities of each answer to the BW question equal

$$p(BW = y \mid WB = y) = \left\| P_{BW}(y) \cdot S_y \right\|^2 = .6545,$$

$$p(BW = n \mid WB = y) = \left\| P_{BW}(n) \cdot S_y \right\|^2 = .3455.$$
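As a quick arithmetic check using the rounded matrix entries: because the collapsed state has coordinates (0, 1) in the WB basis, each projection simply picks out the second column of the corresponding BW projector.

$$P_{BW}(y)\, S_y = \begin{bmatrix} -.4755 \\ .6545 \end{bmatrix}, \qquad \left\| P_{BW}(y)\, S_y \right\|^2 = (.4755)^2 + (.6545)^2 \approx .2261 + .4284 = .6545,$$

$$P_{BW}(n)\, S_y = \begin{bmatrix} .4755 \\ .3455 \end{bmatrix}, \qquad \left\| P_{BW}(n)\, S_y \right\|^2 = (.4755)^2 + (.3455)^2 \approx .2261 + .1194 = .3455.$$

The two conditional probabilities sum to 1, as they must, because the two BW projectors sum to the identity.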

Note that if the person answers “yes” to the WB question (so that the state has collapsed to Sy, and the person is certain to say “yes” if asked the WB question again), then the person must be uncertain about the BW question, because the state Sy has nonzero projections on both of the BW events. This illustrates how the quantum uncertainty principle arises: being certain about one event (the answer to WB is “yes”) necessarily makes one uncertain about a different, incompatible event (the answer to BW). A person cannot be certain about the outcomes of two incompatible measurements at the same time.

Finally, the sequential probability of answering “yes” to the WB question and then “no” to the BW question equals (see the arrow associated with sqrt(.207) in Figure 1)


$$\begin{aligned} p(WB = y, BW = n) &= p(WB = y) \cdot p(BW = n \mid WB = y) \\ &= \left\| P_{WB}(y)\, S_R \right\|^2 \left\| P_{BW}(n)\, S_y \right\|^2 \\ &= \left\| P_{BW}(n)\, P_{WB}(y)\, S_R \right\|^2 = .2073. \end{aligned}$$

The opposite order produces

$$\begin{aligned} p(BW = n, WB = y) &= p(BW = n) \cdot p(WB = y \mid BW = n) \\ &= \left\| P_{WB}(y)\, P_{BW}(n)\, S_R \right\|^2 = .3231. \end{aligned}$$

Because the two orders yield different probabilities, the model produces an order effect.
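Both sequential probabilities can be reproduced numerically. The sketch below is illustrative only and rests on one assumption: the initial state is taken to be S_R = (√.4, √.6) in the WB basis. The text fixes only p(WB = y) = .6; the remaining amplitude and the signs are chosen here because they reproduce the reported values to about three decimals. The helper names `apply` and `norm_sq` are hypothetical.

```python
import math

c, s = 0.8090, 0.5878            # rounded entries of the unitary matrix in the text

# P_BW(n) is the outer product of the "no" basis vector U_n = (c, s) with itself.
P_BW_n = [[c * c, c * s],
          [c * s, s * s]]
P_WB_y = [[0.0, 0.0],
          [0.0, 1.0]]

# Assumption: only p(WB = y) = .6 is given; sqrt(.4) and the signs are illustrative.
S_R = [math.sqrt(0.4), math.sqrt(0.6)]

def apply(M, v):
    """Multiply a 2x2 matrix by a length-2 vector."""
    return [sum(M[i][j] * v[j] for j in range(2)) for i in range(2)]

def norm_sq(v):
    """Squared Euclidean length of a real vector."""
    return sum(x * x for x in v)

# WB asked first: project on WB "yes", then on BW "no".
p_wb_then_bw = norm_sq(apply(P_BW_n, apply(P_WB_y, S_R)))
# BW asked first: project on BW "no", then on WB "yes".
p_bw_then_wb = norm_sq(apply(P_WB_y, apply(P_BW_n, S_R)))

print(round(p_wb_then_bw, 3), round(p_bw_then_wb, 3))
```

The only difference between the two lines that compute the probabilities is the order in which the projectors are applied. Because these two projectors do not commute, the results differ.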

Now we turn to the general model. We assume that events are represented as subspaces of a finite dimensional Hilbert space $H$. A finite dimensional Hilbert space is a vector space defined on a complex field and endowed with an inner product. The dimension of the vector space can be arbitrary, say $N$-dimensional. The state representing the beliefs of a person is a vector $|S\rangle \in H$. A projector for an event such as the answer “yes” to question A is a linear operator $P_A(y)$ in the Hilbert space that satisfies $P_A(y) = P_A(y)^{\dagger} = P_A(y) \cdot P_A(y)$. The projector for the complement, i.e., the answer to question A is “no”, is $P_A(n) = I - P_A(y)$, where $I$ is the identity operator; note that $P_A(y) \cdot P_A(n) = P_A(y) - P_A(y) \cdot P_A(y) = 0$. If question A is asked before question B, then we denote the probability of observing the answer “yes” to question A (e.g., the WB question) and then the answer “no” to question B (e.g., the BW question) as $p(A = y, B = n)$. The opposite order is denoted $p(B = n, A = y)$. Then the general model for question order states simply that

$$p(A = y, B = n) = \left\| P_B(n) \cdot P_A(y) \cdot S \right\|^2,$$

$$p(B = n, A = y) = \left\| P_A(y) \cdot P_B(n) \cdot S \right\|^2.$$
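These expressions follow from the same two steps used in the two-dimensional example: answer the first question, revise the state, then answer the second question. A short derivation, writing $S_{A=y}$ for the revised state (a label introduced here for illustration, not the authors' notation):

$$p(A = y) = \left\| P_A(y)\, S \right\|^2, \qquad S_{A=y} = \frac{P_A(y)\, S}{\left\| P_A(y)\, S \right\|}, \qquad p(B = n \mid A = y) = \left\| P_B(n)\, S_{A=y} \right\|^2,$$

$$p(A = y) \cdot p(B = n \mid A = y) = \left\| P_A(y)\, S \right\|^2 \cdot \frac{\left\| P_B(n)\, P_A(y)\, S \right\|^2}{\left\| P_A(y)\, S \right\|^2} = \left\| P_B(n)\, P_A(y)\, S \right\|^2.$$

The normalization of the revised state cancels, which is why the sequential probability reduces to a single squared length. Swapping the roles of the two projectors gives the expression for the opposite order.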

If we condition on the AB order, then the 2 × 2 joint frequencies for the A, B pair of questions can be described by a classical joint probability distribution; likewise, if we condition on the BA order, then the 2 × 2 joint frequencies can also be described by a classical joint probability distribution. These two classical joint distributions can perfectly describe the empirical results, but they are separate and unrelated distributions that simply reproduce the data. The advantage of the quantum probability model comes from providing a mathematical system that relates the two joint distributions and makes a priori predictions about this relationship. Wang & Busemeyer (2013) proved the following theorem, which makes an a priori prediction for any dimension N and for any projectors representing questions A and B: the quantum probability model must predict a very special pattern of order effects that we call the QQ equality (Wang & Busemeyer, 2013):

$$Q = \big[ p(A = y, B = n) + p(A = n, B = y) \big] - \big[ p(B = y, A = n) + p(B = n, A = y) \big] = 0.$$
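The QQ equality can be spot-checked numerically. The sketch below is a plain-Python illustration, not the proof from Wang & Busemeyer (2013). It makes simplifying assumptions: real-valued vectors instead of complex ones, dimension N = 4, and projectors of rank 2 and rank 1 built from random directions by Gram-Schmidt. All function names are hypothetical.

```python
import random

def dot(u, v):
    """Inner product of two real vectors."""
    return sum(a * b for a, b in zip(u, v))

def random_projector(n, rank, rng):
    """Projector onto the span of `rank` random directions in R^n (Gram-Schmidt)."""
    basis = []
    while len(basis) < rank:
        v = [rng.gauss(0, 1) for _ in range(n)]
        for b in basis:                      # remove components along earlier vectors
            coeff = dot(v, b)
            v = [x - coeff * y for x, y in zip(v, b)]
        length = dot(v, v) ** 0.5
        if length > 1e-8:                    # skip (near-)dependent draws
            basis.append([x / length for x in v])
    return [[sum(b[i] * b[j] for b in basis) for j in range(n)] for i in range(n)]

def complement(P):
    """Projector for the opposite answer: I - P."""
    n = len(P)
    return [[(1.0 if i == j else 0.0) - P[i][j] for j in range(n)] for i in range(n)]

def apply(M, v):
    """Multiply a matrix by a vector."""
    return [dot(row, v) for row in M]

def seq_prob(first, second, state):
    """Probability of the first answer followed by the second answer."""
    v = apply(second, apply(first, state))
    return dot(v, v)

rng = random.Random(0)
N = 4                                        # illustrative dimension
state = [rng.gauss(0, 1) for _ in range(N)]
length = dot(state, state) ** 0.5
state = [x / length for x in state]          # unit-length belief state

PA_y = random_projector(N, 2, rng)
PB_y = random_projector(N, 1, rng)
PA_n, PB_n = complement(PA_y), complement(PB_y)

ab = seq_prob(PA_y, PB_n, state) + seq_prob(PA_n, PB_y, state)   # A asked first
ba = seq_prob(PB_y, PA_n, state) + seq_prob(PB_n, PA_y, state)   # B asked first
q_value = ab - ba
order_gap = seq_prob(PA_y, PB_n, state) - seq_prob(PB_n, PA_y, state)

print(q_value, order_gap)
```

In this sketch `q_value` vanishes up to floating-point error, while `order_gap`, the difference between a single pair of order-reversed probabilities, is in general not zero. The individual sequential probabilities can therefore show an order effect even though the combination in the QQ equality is constrained to cancel.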